Were you aware that 80% of enterprise workloads depend on choosing the right storage solution? AWS offers S3, EBS, and EFS for different needs. Pick the wrong one, and you’re stuck with sluggish performance or soaring costs.

This comparison analyzes AWS storage options with an emphasis on SQL Server applications. S3 offers 99.999999999% durability, EBS delivers up to 64,000 IOPS, and EFS ensures seamless scalability. These insights equip you to make an informed decision.

Let’s dive in and explore which option is right for you.

AWS Storage Options

When choosing the right AWS storage solution for your needs, it’s important to understand the different types of storage available and how they align with your specific workload requirements.

Here’s an overview of AWS’s main storage options:

- Object Storage
  - AWS Object Storage, like Amazon S3, is designed for large-scale data storage that is highly durable and cost-effective. It’s ideal for backups, data archiving, and large-scale analytics.
  - S3’s ability to store unstructured data and integrate with analytics services like Amazon Athena makes it a versatile choice for SQL Server backup storage and long-term data retention.

- Block Storage
  - For high-performance applications like SQL Server, Amazon EBS provides block-level storage with low-latency, high-IOPS capabilities. EBS volumes are key for workloads that require fast, reliable storage, such as databases or ERP systems.
  - Amazon EBS can be customized for performance with options like Provisioned IOPS SSD for mission-critical applications or General Purpose SSD for moderate workloads.

- File Storage
  - File-based storage solutions, such as Amazon EFS, are perfect for applications that require shared access to files across multiple servers or instances. Whether it’s for web servers, development environments, or content repositories, EFS allows for scalable, highly available storage that can grow with your workload.
  - For SQL Servers, EFS offers a simple way to store and manage shared files that need to be accessed by multiple EC2 instances.

1. S3 or Simple Storage Service

S3 is one of the most widely adopted cloud services by AWS.

Amazon built S3 from the ground up as an object store rather than a traditional file system, with its own API and commands for manipulating objects.

It stores data as objects in a flat environment (without a hierarchy or directories).

It is an object store with a simple key-value store design.

Each object is assigned a name (Key). You can use that Key to access the item from anywhere, even directly through the internet.

The content that is stored in the object is called Value.

Each object (file) in the storage contains a header with an associated sequence of bytes from 0 bytes to 5 TB (the maximum size of an object).

The data can be stored in different “buckets”, which are logical placeholders for data, like the folders on a PC.
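To make the flat key-value model concrete, here is a minimal sketch in plain Python. It is a toy in-memory model, not the S3 API: the `bucket` dictionary, the sample keys, and the helper names `list_keys` and `get_object` are all hypothetical. It illustrates that a "folder" in an object store is just a shared key prefix.

```python
# Toy in-memory model of a flat object store. This is NOT the S3 API;
# all names and keys here are hypothetical and for illustration only.
bucket = {
    "backups/2024/full.bak": b"full backup bytes",
    "backups/2024/log-01.trn": b"log bytes",
    "docs/readme.txt": b"hello",
}


def list_keys(store, prefix=""):
    """Return all keys that start with prefix, sorted alphabetically."""
    return sorted(key for key in store if key.startswith(prefix))


def get_object(store, key):
    """Return the value (raw bytes) stored under an exact key."""
    return store[key]


# There are no real directories: "backups/" is only a common key prefix.
backup_keys = list_keys(bucket, "backups/")
print(backup_keys)
print(get_object(bucket, "docs/readme.txt"))
```

Listing by prefix is what gives a flat namespace its folder-like feel, even though every object lives at the same level.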

This service is designed for 99.999999999% (eleven 9’s) durability. It stores data for millions of applications for companies all around the world.

That durability figure is easy to overlook, especially for beginners, but it matters. Storage drives are still the components that fail first and most often in any PC or server, so that level of reliability is nothing to sneeze at.
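To put eleven 9’s in perspective, the arithmetic below treats the durability figure as an average annual loss rate per object. That reading is a simplifying assumption for illustration, and the function names are hypothetical.

```python
from fractions import Fraction

# 99.999999999% durability leaves an average annual loss rate of 1 in 10^11
# per object (simplifying assumption: the figure is read as a per-object rate).
ANNUAL_LOSS_RATE = Fraction(1, 10**11)


def expected_objects_lost_per_year(object_count):
    """Average number of objects lost per year for a given object count."""
    return object_count * ANNUAL_LOSS_RATE


def years_per_lost_object(object_count):
    """Average number of years between single-object losses."""
    return 1 / expected_objects_lost_per_year(object_count)


# With 10 million stored objects, one loss is expected roughly every 10,000 years.
print(years_per_lost_object(10_000_000))  # 10000
```

Exact fractions are used instead of floats because `1 - 0.99999999999` is not represented exactly in floating point.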

AWS S3 key features:

- Capabilities to append metadata tags to objects

- Configure and enforce data access controls

- Move and store data across the S3 Storage Classes

- Secure data against unauthorized users

- Run big data analytics

- Monitor data at the object and bucket levels

Note: AWS continuously evolves its services, so new features may be available by the time you read this.

Additionally, at a very basic level, S3 can be:

- A host for a company’s documents and files, or

- mapped as a file server.

These files can be encrypted with AWS Key Management Service (KMS) keys.

S3 can also host a static website’s content, which can be cached using the Amazon CloudFront content delivery network.

Many companies use S3 for SQL Server backups – it’s a cheap, reliable way to store them long-term.

Imagine you have weekly full backups and hourly transaction logs. Instead of paying for high-cost SSD storage, you can offload them to S3 Glacier and restore them only when needed.
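One way to automate that offloading is an S3 lifecycle rule. The sketch below only builds the rule as a Python dictionary in the shape that boto3's `put_bucket_lifecycle_configuration` accepts as its `LifecycleConfiguration` argument; it does not call AWS. The `sqlbackups/` prefix, the 30-day delay, and the helper name are assumptions for illustration.

```python
def glacier_lifecycle_rule(prefix, days_before_archive):
    """Build one lifecycle rule that moves objects under prefix to S3 Glacier."""
    return {
        "ID": f"archive-{prefix.strip('/')}",
        "Filter": {"Prefix": prefix},
        "Status": "Enabled",
        "Transitions": [
            {"Days": days_before_archive, "StorageClass": "GLACIER"},
        ],
    }


# Hypothetical policy: archive SQL Server backups 30 days after upload.
lifecycle_configuration = {
    "Rules": [glacier_lifecycle_rule("sqlbackups/", 30)],
}
print(lifecycle_configuration)

# With boto3 installed and credentials configured, this could be applied as:
#   s3 = boto3.client("s3")
#   s3.put_bucket_lifecycle_configuration(
#       Bucket="my-backup-bucket",  # hypothetical bucket name
#       LifecycleConfiguration=lifecycle_configuration,
#   )
```

Keeping recent backups in a standard storage class for a while before archiving is a common choice, since restores from Glacier are slower than ordinary S3 reads.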

2. EBS or Amazon Elastic Block Store

AWS EBS provides highly available, consistent, low-latency block storage for Amazon EC2 (Elastic Compute Cloud).

Amazon EBS is a storage system for the drives of your virtual machines (which works well for SQL Servers!).

Like a traditional disk, EBS stores data in blocks, and its volumes can be attached only to EC2 instances.

Capacity planning is essential with EBS: plan volume additions and expansions ahead of time to avoid unplanned outages.

If a volume runs out of space, you can attach another volume (each instance has a limit on the number of attached EBS volumes) or increase the size of the existing one.

Typical use cases include relational and NoSQL databases like Microsoft SQL Server and MySQL or Cassandra and MongoDB, Big Data analytics engines (like the Hadoop/HDFS ecosystem and Amazon EMR), stream and log processing applications (like Kafka and Splunk), and data warehousing applications (like Vertica and Teradata).

Key Factors for Choosing the Right EBS Volume

Selecting the right Amazon EBS volume type depends on your workload’s specific needs. Keep these factors in mind:

- IOPS and throughput requirements for your application

- The Read vs. Write ratios

- Data type (Random or Sequential Access)

- The chunk size of data (to align the EBS volume to your application)
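IOPS, throughput, and I/O (chunk) size are linked: throughput is roughly IOPS multiplied by the size of each I/O. The sketch below shows that relationship; the function names and the example I/O sizes are assumptions for illustration, and real workloads mix I/O sizes.

```python
KIB_PER_MIB = 1024


def throughput_mib_s(iops, io_size_kib):
    """Throughput in MiB/s delivered by a given IOPS rate and I/O size."""
    return iops * io_size_kib / KIB_PER_MIB


def iops_needed(target_mib_s, io_size_kib):
    """IOPS required to reach a target throughput at a given I/O size."""
    return target_mib_s * KIB_PER_MIB / io_size_kib


# 16,000 IOPS at 16 KiB per I/O works out to 250 MiB/s.
print(throughput_mib_s(16_000, 16))  # 250.0

# The same 250 MiB/s needs only 4,000 IOPS when each I/O is 64 KiB.
print(iops_needed(250, 64))  # 4000.0
```

This is why a workload with large sequential I/O can hit a throughput cap long before it reaches an IOPS cap, and the reverse for small random I/O.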

This comparison covers four EBS volume types, each designed for different performance and cost needs. Here’s a breakdown of their key features and best use cases:

- Provisioned IOPS SSD (io1)
  - Description: Highest-performance SSD volume, designed for mission-critical, low-latency or high-throughput workloads such as high-transaction relational databases like Microsoft SQL Server.
  - Max. IOPS/Volume: 64,000.
  - IOPS per instance: 80,000.
  - Max. Throughput/Volume: 1,000 MiB/s.
  - Max. Throughput/Instance: 1,750 MiB/s.
  - Good choice for: Critical business applications that require sustained IOPS performance, or more than 16,000 IOPS or 250 MiB/s of throughput per volume. Large database workloads, such as Microsoft SQL Server, MySQL, and Oracle.

- General Purpose SSD (gp2)
  - Description: General-purpose SSD volume that balances price and performance for a wide variety of workloads. It has a baseline performance of 3 IOPS/GB.
  - Max. IOPS/Volume: 16,000.
  - IOPS per instance: 80,000.
  - Max. Throughput/Volume: 250 MiB/s.
  - Max. Throughput/Instance: 1,750 MiB/s.
  - Good choice for: System boot volumes and small to medium-sized databases such as SQL Server, Oracle, MySQL, MongoDB, and Couchbase.

- Throughput Optimized HDD (st1)
  - Description: Low-cost HDD volume designed for frequently accessed, throughput-intensive workloads. It can be used with testing and development environments on Amazon EC2 or with applications that don’t require a lot of read/write operations.
  - Max. IOPS/Volume: 500.
  - IOPS per instance: 80,000.
  - Max. Throughput/Volume: 500 MiB/s.
  - Max. Throughput/Instance: 1,750 MiB/s.
  - Good choice for: Streaming workloads requiring consistent, fast throughput at a low price, such as big data, data warehouses, and log processing. Cannot be a boot volume.

- Cold HDD (sc1)
  - Description: HDD volume that provides the lowest cost per GB of all EBS volume types. Designed for less frequently accessed workloads.
  - Max. IOPS/Volume: 250.
  - IOPS per instance: 80,000.
  - Max. Throughput/Volume: 250 MiB/s.
  - Max. Throughput/Instance: 1,750 MiB/s.
  - Good choice for: Throughput-oriented storage for large volumes of data that is infrequently accessed. Scenarios where the lowest storage cost is important. Cannot be a boot volume.
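The per-volume limits above can be turned into a simple first-pass selector. This is a sketch, not an official sizing tool: the `VolumeType` class and `pick_volume_type` function are hypothetical, and the cheapest-to-most-expensive ordering (sc1, st1, gp2, io1) is an assumption made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VolumeType:
    name: str
    max_iops: int
    max_throughput_mib_s: int
    bootable: bool


# Per-volume limits taken from the list above. The order, cheapest first,
# is an illustrative assumption rather than a quoted price list.
VOLUME_TYPES = [
    VolumeType("sc1", 250, 250, False),
    VolumeType("st1", 500, 500, False),
    VolumeType("gp2", 16_000, 250, True),
    VolumeType("io1", 64_000, 1_000, True),
]


def pick_volume_type(iops, throughput_mib_s, boot=False):
    """Return the first (cheapest) type meeting both limits, or None."""
    for volume in VOLUME_TYPES:
        if boot and not volume.bootable:
            continue
        if volume.max_iops >= iops and volume.max_throughput_mib_s >= throughput_mib_s:
            return volume.name
    return None  # no single volume fits; the workload would need several


print(pick_volume_type(3_000, 200))    # gp2
print(pick_volume_type(20_000, 500))   # io1
print(pick_volume_type(400, 400))      # st1
```

A selector like this only checks hard limits. Latency sensitivity and access patterns still need a benchmark before committing to a volume type.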

3. EFS or Amazon Elastic File System

AWS EFS is a shared, elastic file storage system that grows and shrinks as you add and remove files.

Amazon EFS offers a traditional file storage paradigm, with data organized into directories and subdirectories.

It is a highly scalable service that integrates with AWS Cloud services and on-premises resources.

It uses the NFSv4 protocol to allow a traditional hierarchical directory structure.

You can mount EFS to various AWS services and access it from different virtual machines.

Amazon EFS is automatically scalable. That means that your running applications won’t have any problems if the workload suddenly becomes higher (the storage will scale itself automatically).

If the workload decreases, the storage will scale down too.

Amazon Elastic File System was created to serve applications with heavy workloads that need scalable storage and relatively fast output.

EFS is basically AWS’s version of a network drive – great for sharing files across multiple EC2 instances. But keep in mind, it’s not optimized for databases like SQL Server. If you need shared storage for app logs, configs, or media files, EFS is a solid choice.

There is a Standard and an Infrequent Access storage class available with Amazon EFS.

Using Lifecycle Management, files not accessed for 30 days will automatically be moved to a cost-optimized Infrequent Access storage class. This provides a simple way to store and access active and infrequently accessed file system data in the same file system while reducing AWS storage costs by up to 85%.
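The 30-day rule can be pictured as a simple classification by last access time. The sketch below is a plain-Python model of that policy, not the EFS API; the file paths, dates, and the `efs_storage_class` helper are hypothetical.

```python
from datetime import datetime, timedelta

# Files not accessed for 30 days move to Infrequent Access (policy from the text).
IA_AFTER = timedelta(days=30)


def efs_storage_class(last_access, now):
    """Return the storage class a file would land in under the 30-day policy."""
    idle_time = now - last_access
    return "Infrequent Access" if idle_time >= IA_AFTER else "Standard"


# Hypothetical files with their last access times.
now = datetime(2024, 6, 30)
files = {
    "app/config.yaml": datetime(2024, 6, 29),
    "media/old-video.mp4": datetime(2024, 3, 1),
}

placement = {path: efs_storage_class(ts, now) for path, ts in files.items()}
print(placement)
```

Both classes live in the same file system, so applications keep using the same paths regardless of where a file is stored.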

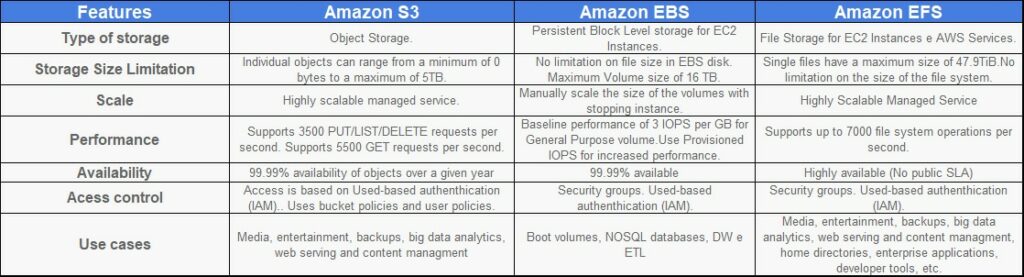

Which Amazon AWS Storage Service is Right for You?

The deciding factor between AWS storage options most likely comes down to how much you can afford to pay for storage performance that fits your needs.

Amazon S3 can be accessed from anywhere, and it is generally the cheapest option per gigabyte stored.

However, S3 pricing has several other components, including the number of requests made, S3 Analytics, and data transfer out of S3 per gigabyte. More details are on the Amazon S3 pricing page.

EBS and EFS provide lower latency and higher IOPS, which makes them better for transactional workloads, while S3 excels at massively parallel reads and writes.

An EBS volume can be resized or moved to a different volume type with a single API call, though its size can only be increased, never decreased (older instance types may require stopping the instance first).

AWS EBS is only available to EC2 instances but is cheaper than EFS. More details are on the Amazon EBS pricing page.

EFS is best used for large quantities of data, such as large analytic workloads.

Data at this scale may not fit on a single EBS volume, which forces users to break up the data and distribute it across multiple volumes.

EFS allows concurrent access from thousands of EC2 instances, making it possible to process and analyze large amounts of data seamlessly.

More details are on the Amazon EFS pricing page.

Choose the Right AWS Storage for SQL Server

AWS provides several storage options, but for SQL Server, EBS volumes are the top choice, either General Purpose SSD or Provisioned IOPS.

Provisioned IOPS (io1/io2) can deliver insane speeds, but here’s the catch: it’s expensive. Plenty of companies have racked up huge bills because they provisioned far more IOPS than they actually needed.

Always benchmark your workload first before going for the highest-tier storage.

Before selecting a storage type, compare costs and performance needs. General Purpose SSDs (gp2/gp3) are often sufficient for many workloads, while provisioned IOPS (io1/io2) is best for high-transaction databases that require consistent speed.
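As a quick check before paying for provisioned IOPS, the gp2 baseline of 3 IOPS/GB noted earlier can be compared against a benchmarked requirement. The sketch below assumes a 100 IOPS floor and treats the 16,000 IOPS per-volume cap as the ceiling; the floor is an assumption from general AWS documentation rather than from this article, and the function names are hypothetical.

```python
import math

GP2_IOPS_PER_GIB = 3
GP2_MIN_IOPS = 100       # assumed floor; not stated in this article
GP2_MAX_IOPS = 16_000    # per-volume cap listed earlier


def gp2_baseline_iops(size_gib):
    """Baseline IOPS for a gp2 volume of the given size."""
    return max(GP2_MIN_IOPS, min(GP2_MAX_IOPS, GP2_IOPS_PER_GIB * size_gib))


def gp2_size_for_iops(required_iops):
    """Smallest gp2 size in GiB whose baseline meets required_iops, or None."""
    if required_iops > GP2_MAX_IOPS:
        return None  # beyond gp2; consider provisioned IOPS volumes
    return math.ceil(required_iops / GP2_IOPS_PER_GIB)


print(gp2_baseline_iops(500))      # 1500
print(gp2_size_for_iops(6_000))    # 2000
print(gp2_size_for_iops(20_000))   # None
```

If the benchmarked IOPS fits under the gp2 baseline for a volume size you already need, provisioned IOPS is likely unnecessary for that workload.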

If you’re unsure which option fits your workload, get in touch with Red9’s SQL experts for guidance.
